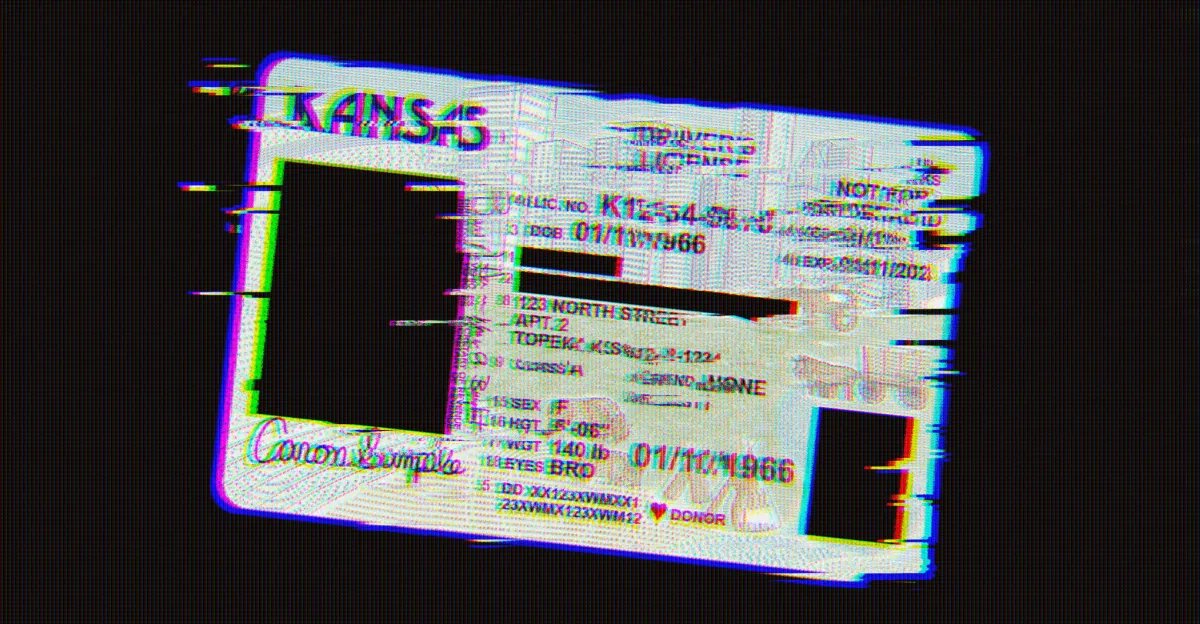

In 2026, a photo ID is not just paperwork: it essentially grants you permission to exist in society. Last month, the Kansas legislature passed a law categorically invalidating trans people’s driver’s licenses and IDs overnight, requiring them to obtain new IDs with incorrect gender markers. Now, with a slew of “Age Verification” laws requiring online platforms to perform digital identity checks, tech policy experts warn that those dangers are being extended onto the internet, where biased automated systems threaten to expose trans people and lock them out of websites, public services, and apps.

As of March 2026, more than half of US states have passed “Age Verification” and “Digital ID” laws. These verification systems (sometimes called “age-gating”) add a new dimension to problems that trans people have been dealing with for decades.

“This is yet another step in requiring people to identify themselves everywhere, in physical and online spaces, as their so-called gender assigned at birth,” Dia Kayyali, an independent tech and human rights consultant, told The Verge.

As many have pointed out, having an ID that doesn’t match your appearance or lived reality is not a matter of pronouns or “validation,” but one of material consequence: it prevents trans people from freely moving about the world without risking constant harassment, violence, and discrimination. Advocates for Trans Equality notes in its guide on trans identity documents that “incorrect identification exposes people to a range of negative outcomes, from denial of employment, housing, and public benefits to harassment and physical violence.”

In January 2025, the Trump administration issued a broad anti-trans executive order claiming the federal government would only recognize a person’s “immutable biological classification as either male or female,” defying the overwhelming consensus of medical science, which shows that sex is neither immutable nor purely biological. In November 2025, the Supreme Court overturned a court order that had temporarily stopped the Trump administration from blocking gender changes on US passports. While the executive order wasn’t legally binding, the Supreme Court and the Kansas Department of Revenue are both following the instruction to the letter, and it’s likely more federal and state agencies will follow suit.

Automated online ID checking systems add new potential dangers to having a mismatched ID. Research shows that the very design of online ID checking virtually guarantees that trans people, and people of color, will experience issues disproportionately.

Digital ID and age verification services generally fall into two categories. The systems typically used by government agencies to verify identity (like ID.me, which is used in some states to verify benefits like SNAP) compare an uploaded picture of a person’s ID against information stored in a government database. Others mandate biometric scans and AI “Facial Age Estimation,” an unproven computer vision technique that claims the ability to determine age by analyzing facial features. This technique is based on facial recognition, and is currently being used by platforms like Meta, OnlyFans, and Roblox, where it’s being outsmarted by teenagers and is generally a huge disaster.

“Both approaches have issues and disproportionate failure rates for trans people,” Os Keyes, a postdoctoral fellow at the University of Massachusetts who researches algorithmic bias against trans people, told The Verge. Technical experts like Keyes have criticized these systems as inherently biased against trans people, whose identities don’t always fit neatly into government boxes, and whose facial features often change dramatically as a result of hormone replacement therapy (HRT).

“These systems are specifically designed to look for discrepancies, and they’re going to find them,” said Kayyali. “If you are a woman and anyone on the street would say ‘that’s a woman,’ but that’s not what your ID says, that’s a discrepancy.” The danger of these discrepancies extends not just to trans people, but to anyone else whose appearance doesn’t match normative gendered expectations.

“A lot of age estimation systems are built on a combination of anthropological sex markers and skin texture. This means they fall over and provide inaccurate results when faced with people whose markers and skin texture, well, don’t match,” explains Keyes. For example, one of the most prominent markers algorithms measure to determine sex is the brow ridge. “Suppose you have a trans man on HRT and a trans woman on HRT, the former with low brow ridges and rougher skin, the latter with high ridges and softer skin,” Keyes explains. “The former is likely to have their age overestimated; the latter, underestimated.”

Making this even more Kafkaesque is the fact that many of these systems are black boxes and lack even a basic method of redress through which automated decisions can be appealed, mostly because the age verification laws don’t specifically require one. Many of the laws, including the one in Kansas, incorporate language that only requires platforms to conduct age verification through “a commercially available database” or “any other commercially reasonable method,” and say nothing about the transparency or accuracy of the systems.

Kendra Albert, a technology lawyer and Partner at Albert Sellars, LLP, says that the open-ended language of the bills allows companies to avoid legal liability as long as they implement some kind of age-verification solution, regardless of its effectiveness or whether it has a way to appeal algorithmic decisions.

“In a lot of cases, it’s not saying you have to, it’s just saying you may be liable if you don’t,” Albert said. “That makes it harder to hold anyone accountable for the decisions to implement these tools, which are gonna have negative effects on particular populations of folks.”

This leaves many platforms seeking to remove legal liability by relying on third-party age verification vendors like Yoti and k-ID — many of which, Albert notes, usually disclaim liability for algorithmic decision-making as part of their Terms of Service. Smaller platforms that can’t afford these vendors, meanwhile, will either implement their own forms of verification (which also means they become responsible for securely storing extremely sensitive user data), or simply shut down to avoid the legal risk.

Using third-party vendors also means that companies may not be securely storing private data, and may be selling user information to other companies or the government. Last month, Discord, a platform hugely popular among LGBTQ+ gamers, ended its partnership with Persona, a Peter Thiel-backed identity verification company, after hackers revealed the system was sending the private data it collected to federal agencies and comparing photos and biometric data against government watch lists. The Trump administration has shown it has no qualms about using such lists to label and target activists and anyone it deems an enemy. Last year, Trump signed NSPM-7, a national security presidential memorandum that describes a wide range of political views as “domestic terrorism” to be targeted by law enforcement, specifically including “radical gender ideology,” the administration’s shorthand for anything acknowledging the fact that trans people exist.

Needless to say, using platforms that require you to submit your ID and biometric data to a third-party company may not be an appealing option for many trans people, who already face disproportionate risk of doxxing and targeted violence. Last year, it was reported that Texas was making a list of trans people from the names of those who had requested to change the gender marker on their state ID.

Speaking about Kansas, Albert said that “in a lot of these circumstances, [the government’s] power comes from the ability to weaponize this information against individual people.”

And then there are the types of content that digital ID checks are restricting. While the stated goal of most of these laws is blocking minors from accessing porn, advocates have warned the definitions of content “harmful to children” are flexible enough to encompass all sorts of supposedly “harmful” materials, from online LGBTQ+ communities to information on birth control. For example, the controversial Kids Online Safety Act (KOSA) has proposed an online censorship regime under a “duty of care” that requires platforms to avoid showing content that is “harmful to minors,” and designates the Trump-appointed Federal Trade Commission (FTC) as the entity that would determine what kinds of material meet that description. (A companion bill, called the Kids Internet and Digital Safety Act, has also advanced in the House of Representatives, though it has notably removed language describing the duty of care.)

“I think it’s fair to say that if you look at the history of obscenity in the US and what’s considered explicit material, stuff with queer and trans material is much more likely to be considered sexually explicit even though it’s not,” said Albert. “You may be in a circumstance where sites with more content about queer and trans people are more likely to face repercussions for not implementing appropriate age-gating or being tagged as explicit.”

For trans people, many of whom discovered community and acceptance for the first time online, this is a major shift. In combination, anti-trans ID and age-gating laws will block access to invaluable resources and destroy others outright, leaving few alternatives.

“If you can’t afford a VPN, you’re going to use a free VPN that steals your data, or just not access that site at all,” said Kayyali.

Experts worry that LGBTQ+ site operators and many trans folks attempting to access age-gated websites, faced with onerous systems that weren’t designed with them in mind, may decide that it isn’t worth the risk.